OpenAI's DALL-E model for generating images was made available a few months ago. One of its uses is generating art, and magazines and blogs are already using it to supply images for articles. I tried it for illustrating articles on my own blog. The results are funny, but not useful.

Before showing the examples, it's important to note how DALL-E works: it takes text "prompts" and generates images based on them. "Prompt design" matters a great deal for the output. One recommended approach is to specify the style the image should be in, such as "digital art", "Matisse" or "line art".
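As a rough sketch of that prompt pattern, the snippet below just assembles a prompt string from a subject and an optional style hint. The function name `build_prompt` and its parameters are made up for illustration and are not part of any DALL-E tooling; the resulting string would still have to be pasted into (or sent to) the image generator.

```python
from typing import Optional


def build_prompt(subject: str, style: Optional[str] = None,
                 in_the_style_of: bool = False) -> str:
    """Combine a subject with an optional style hint into one prompt string.

    Hypothetical helper for illustration only.
    """
    subject = subject.strip()
    if not style:
        # No style hint: the prompt is just the subject.
        return subject
    style = style.strip()
    if in_the_style_of:
        # Named styles, e.g. "... in the style of matisse"
        return f"{subject} in the style of {style}"
    # Medium or genre styles, e.g. "..., pixel art"
    return f"{subject}, {style}"


if __name__ == "__main__":
    print(build_prompt("a judge in court", "pixel art"))
    print(build_prompt("an audio guide for the business of law",
                       "bladerunner", in_the_style_of=True))
    print(build_prompt("justice"))
```

Keeping the subject and the style separate makes it easy to try the same subject in several styles and compare the outputs.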

The best result is the one shown at the top of this blog post, which is for the prompt "regulatory ladders showing the disadvantages of over-regulation in the style of monet". This is the kind of image that really could be put in a blog post and would add to it.

Generating Images For Blog Posts

What about other prompts? They illustrate that robots will not be taking the jobs of illustrators any time soon for many kinds of content.

Legal Debt: The Lawyer's Technical Debt in the style of matisse

an audio guide for the business of law in the style of bladerunner

stablecoins vs. Canadian dollar CBDC in digital art style

Canada's money supply increasing on a graph in the style of the economist, showing a doubling over 12 months

Limitations Of Prompt-Based Image Generation

Someone familiar with prompt-based image systems would probably say that the above examples are unfair and that I ought to have written better prompts. But there's no better prompt for the subjects above: they're inherently difficult to illustrate, especially with this sort of image model. The "regulatory ladder" illustration in the style of Monet at the top is quite amazing, and would be a great choice of image. DALL-E (and similar models) have their place, and they're a spectacular demonstration of how far machine-learning systems have come in generating images, but they're not really "AI" in the sense of acting intelligently. Still, if the user's expectations are reasonable, the output can be fantastic, such as the image below for "a judge in court, pixel art".

![]()

Even more challenging examples can be rendered quite well, such as "A fireplace burning money while people gather around the fire laughing, pixel art".

Confusing Outputs

Many of the images produced by DALL-E are gibberish or confusing. They get important details wrong in ways that a human wouldn't. For example, the image below was generated for "a scale weighing good and evil", but the image title is "VLAL". Why? Who knows.

It's not hard to generate odd images with DALL-E. The image below is "Superman crushing a car with his hands, digital art style". Why is Superman's cape backwards?

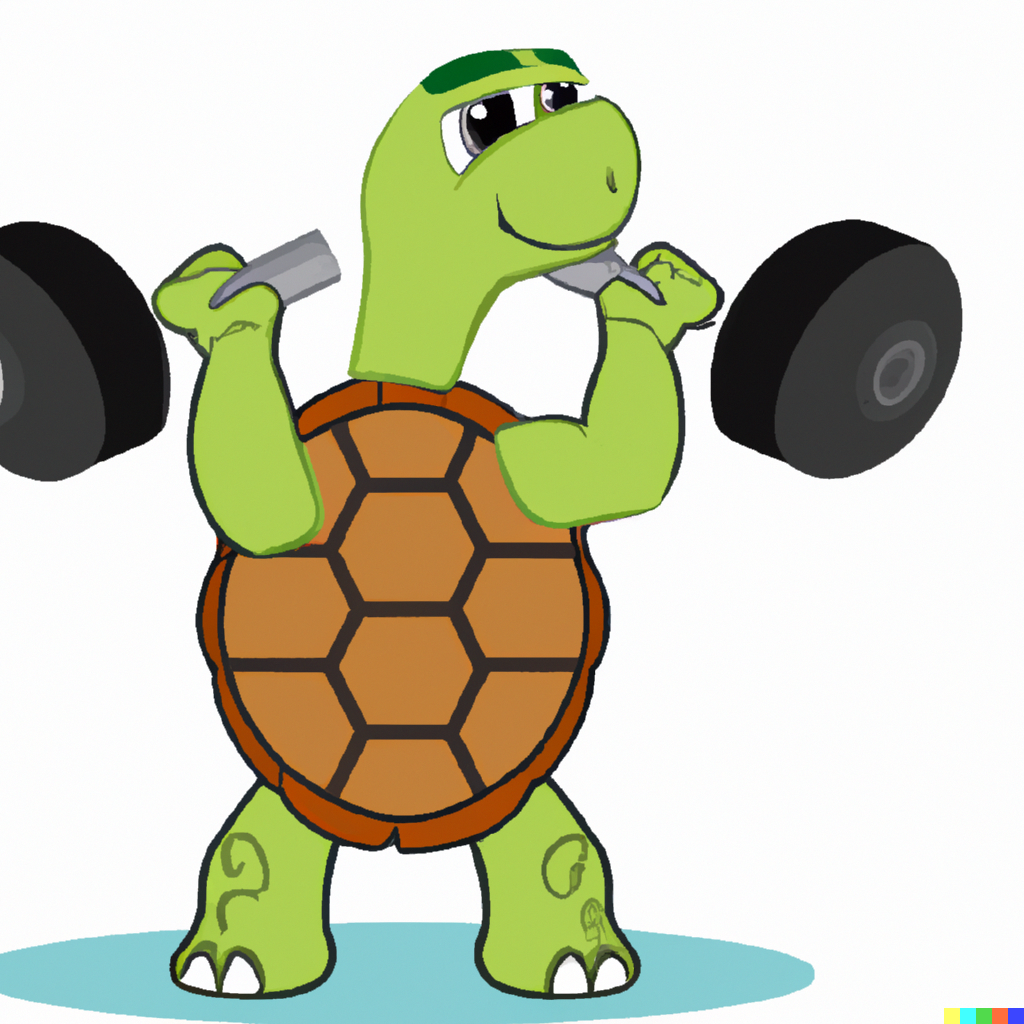

DALL-E images are very often disconnected from a model of how the world works, because they're actually produced from a collection of images and texts, not a causal model. This can be seen in images like "a turtle working out at the gym lifting weights", shown below.

Even a game like chess, with many representations online that must have been fed into DALL-E, can't be rendered properly. The first prompt below is "a duck playing chess digital art". The chessboard is 6x5 (instead of 8x8) and doesn't seem to be checkered. The pieces aren't normal chess pieces either. Further down is the simpler "a duck playing chess".

DALL-E really falls flat on simple examples without context. For example, the image below is the first output for the prompt "justice". I don't know what image someone would expect for "justice" but probably not that.

Practical Uses

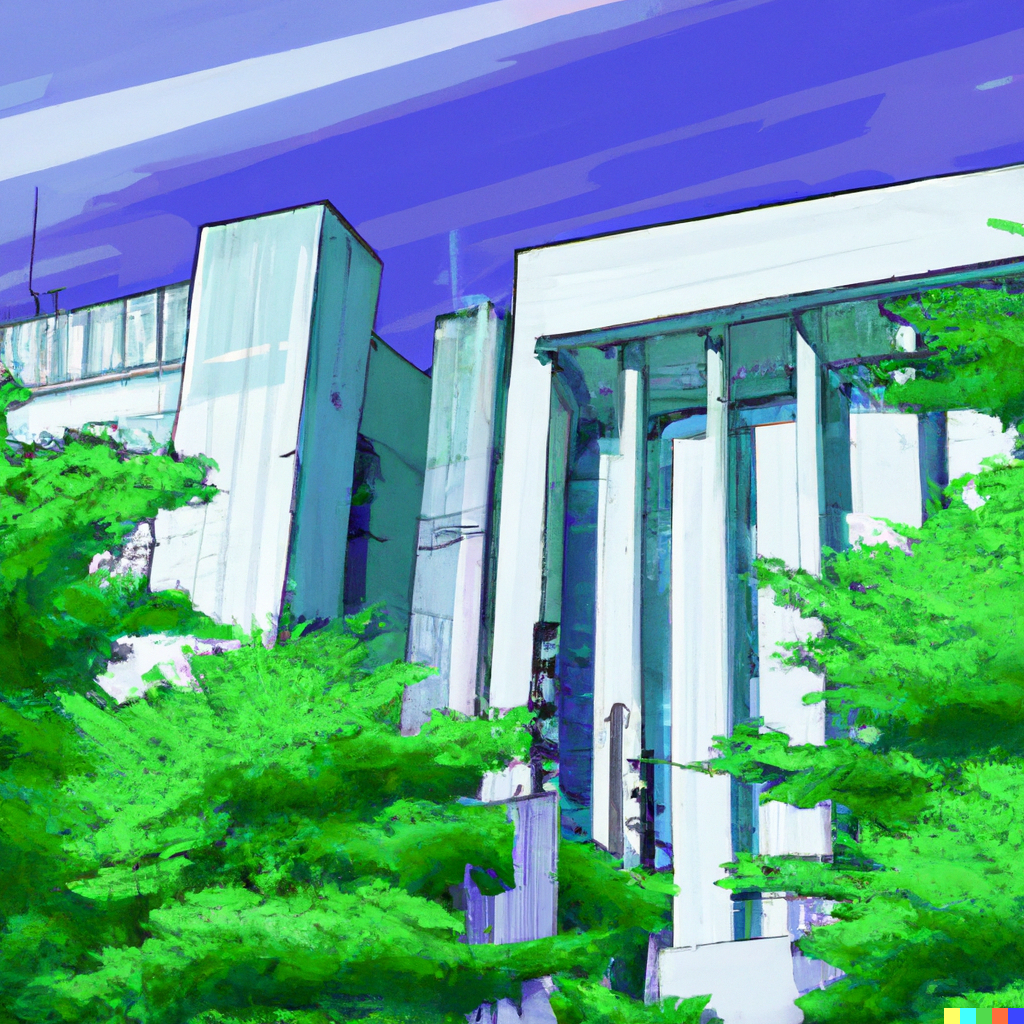

DALL-E is great for generating images of generic ideas. It's especially good at futuristic and digital art styles. For example, the image below is "a courthouse with tall trees in Neo Tokyo, digital art style". The result is something an actual artist could reasonably have produced, and with DALL-E it costs pennies. So for the right kind of content, DALL-E images (or the newer versions of this approach that are rapidly coming online) can be a great way to illustrate the content and brighten up blog posts.

Copyright Issues

DALL-E was built by scanning billions of images. The content it produces is really an amalgam of existing images made by people. It's possible, for some prompts, to generate images that draw significantly from a single image, which may pose copyright problems. A similar tool for generating software code has recently come under fire for reproducing copyrighted code, as detailed here: https://githubcopilotinvestigation.com. This is a new area for copyright law, but the use of copyrighted images and text is itself quite old, and if these tools are generating verbatim or near-verbatim copies of copyrighted content, that's going to be a problem for the future use of these tools and for their creators.